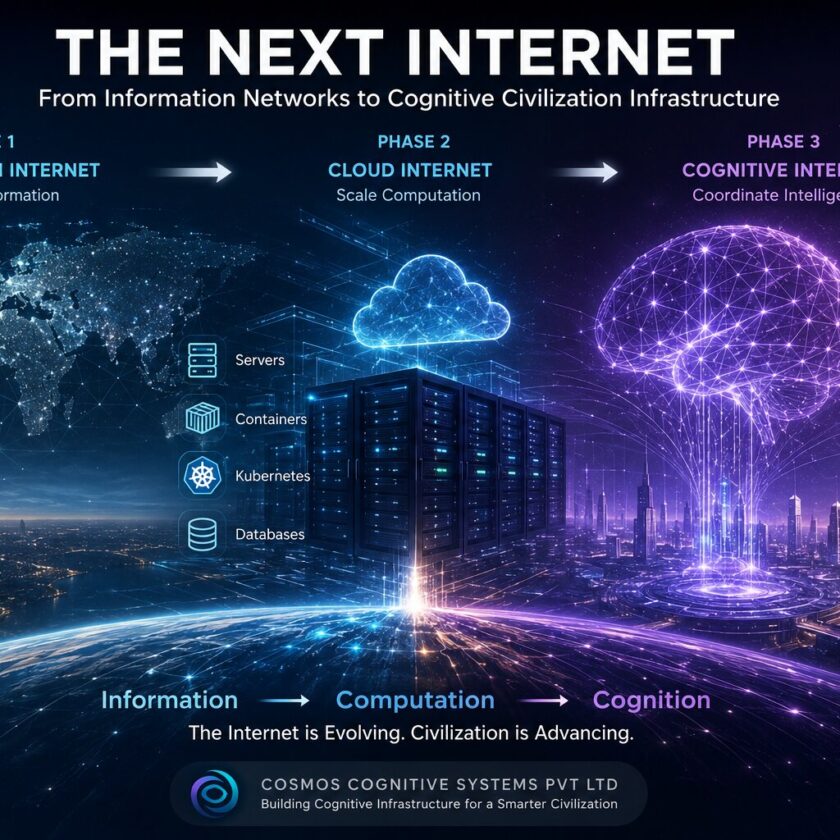

For two decades, modern computing has scaled through a simple assumption:

More intelligence requires more infrastructure.

More:

- servers

- GPUs

- storage

- cooling

- networking

- energy

- replication

- centralized orchestration

This model powered:

- cloud computing

- hyperscale infrastructure

- AI training systems

- internet-scale platforms

But a structural problem is now emerging.

As artificial intelligence evolves toward:

- persistent cognition

- multi-agent systems

- real-time reasoning

- world modeling

- autonomous coordination

the infrastructure cost curve becomes unsustainable.

The current trajectory leads toward:

- exploding GPU demand

- rising energy consumption

- massive capital expenditure

- thermal bottlenecks

- infrastructure centralization

- compute scarcity

Civilization-scale cognition cannot scale linearly with hardware growth alone.

A fundamentally different architecture is required.

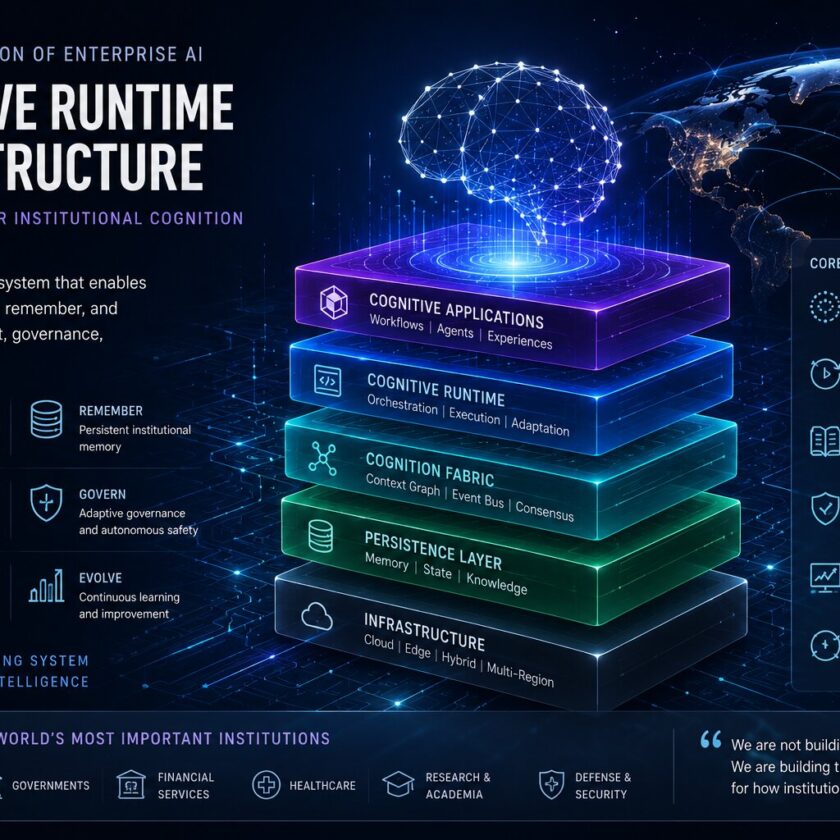

That architecture is beginning to emerge through:

Cognitive Runtime Infrastructure.

And its most important implication may be this:

The future of intelligence may depend less on infinite compute and more on intelligent orchestration.

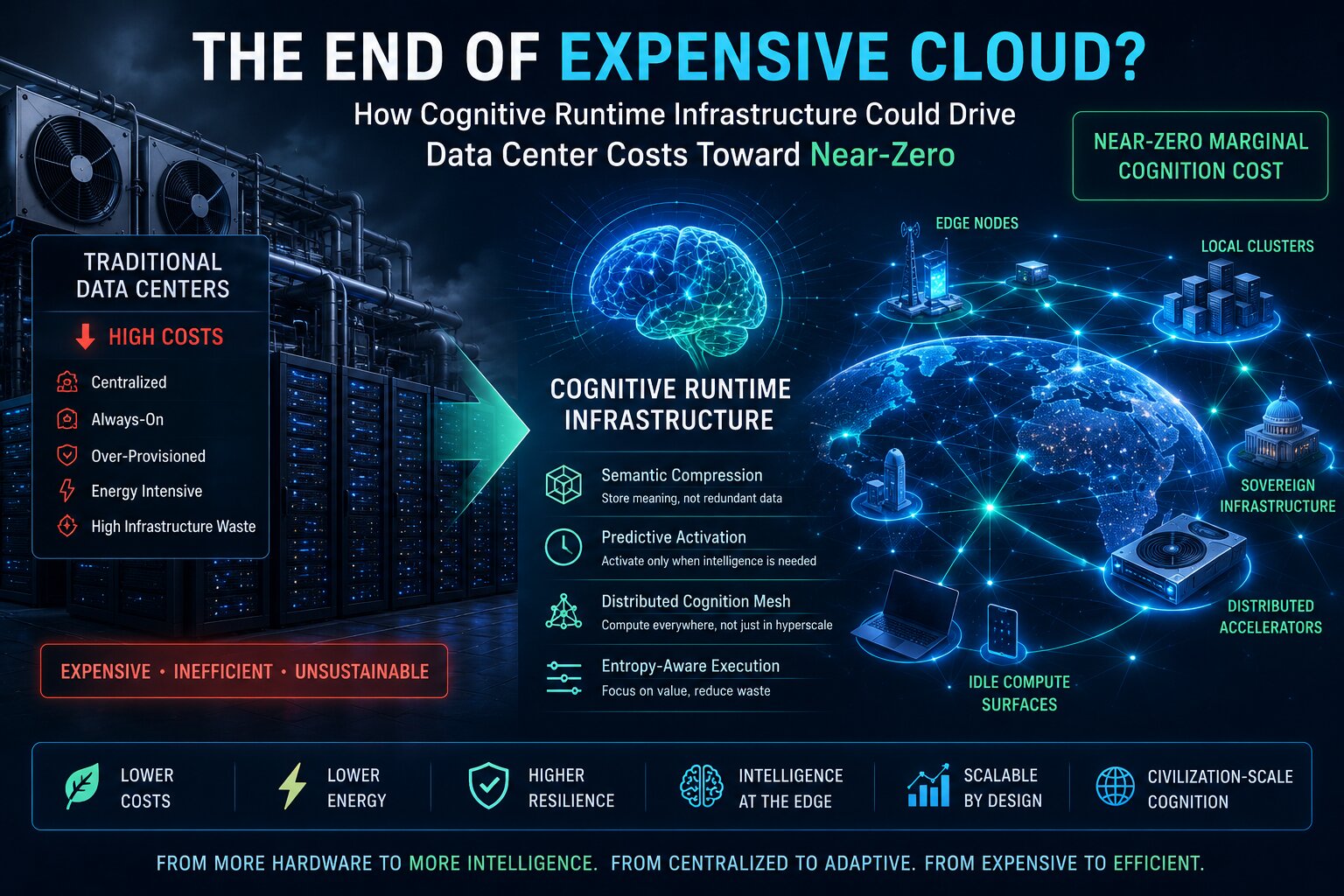

The Hidden Problem With Modern AI Infrastructure

Today’s AI systems often use their infrastructure inefficiently.

Most infrastructure assumes:

- constant availability

- full replication

- centralized inference

- persistent activation

- uniform execution priority

This creates enormous waste.

Large portions of modern compute infrastructure remain:

- idle

- underutilized

- overprovisioned

- poorly scheduled

- semantically unaware

Traditional systems optimize:

- CPU utilization

- storage throughput

- networking latency

But they do not optimize:

cognition itself.

That distinction changes everything.

The Shift From Compute-Centric to Cognition-Centric Infrastructure

Traditional cloud systems ask:

How do we scale compute?

Cognitive runtime systems ask:

How do we maximize intelligence density per unit infrastructure cost?

This leads to a radically different design philosophy.

The objective is no longer:

- maximum hardware

- maximum redundancy

- maximum replication

The objective becomes:

maximum cognition with minimum entropy.

The Four Architectural Shifts

The transition toward near-zero marginal cognition cost depends on four major infrastructure transformations.

1. Semantic Compression

Modern systems store enormous quantities of redundant information.

Cognitive runtime infrastructure introduces:

semantic compression.

Instead of storing:

- raw logs

- duplicate context

- repetitive state

- isolated events

the system stores:

- semantic meaning

- causal lineage

- contextual embeddings

- adaptive memory graphs

This dramatically reduces:

- storage overhead

- memory duplication

- synchronization costs

- retrieval complexity

The system remembers meaning rather than merely preserving raw data.
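As a rough illustration of the idea, the sketch below stores each distinct piece of context once and records events as references to it, with a parent link for causal lineage. It is a minimal sketch under stated assumptions: the `MemoryGraph` class and its methods are hypothetical names, and whitespace and case normalization stands in for real semantic matching, which would more plausibly rely on embeddings.

```python
import hashlib
from dataclasses import dataclass, field


def _key(text: str) -> str:
    # Normalize whitespace and case so trivially different copies collapse.
    # (Assumption: a stand-in for genuine semantic similarity.)
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass
class MemoryGraph:
    meanings: dict = field(default_factory=dict)  # key -> canonical text
    events: list = field(default_factory=list)    # (event_id, key, parent_id)

    def record(self, text: str, parent_id=None) -> int:
        key = _key(text)
        self.meanings.setdefault(key, text)  # store each distinct meaning once
        event_id = len(self.events)
        self.events.append((event_id, key, parent_id))
        return event_id

    def lineage(self, event_id: int) -> list:
        # Walk parent links back to the root to recover causal lineage.
        chain = []
        current = event_id
        while current is not None:
            _, key, parent = self.events[current]
            chain.append(self.meanings[key])
            current = parent
        return list(reversed(chain))
```

Recording the same message twice adds a second event but no second copy of the text, which is the storage saving the section describes in miniature.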

2. Predictive Activation

Most infrastructure today remains continuously active.

But civilization-scale cognition does not require every subsystem to remain awake at all times.

Future runtime systems dynamically activate cognition only when:

- relevance increases

- entropy risk rises

- governance thresholds trigger

- strategic importance changes

This creates:

event-driven cognition activation.

Idle cognition collapses toward zero resource consumption.
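One way to picture event-driven activation is a set of subsystems that stay dormant until an incoming event crosses a relevance threshold. The following is a minimal sketch, not a reference design: `Subsystem`, `dispatch`, the single `relevance` score, and the threshold values are all assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Subsystem:
    name: str
    threshold: float  # hypothetical activation threshold in [0, 1]
    active: bool = False
    runs: int = 0

    def handle(self, relevance: float) -> bool:
        # Wake only when the event's relevance meets the threshold;
        # otherwise stay dormant and do no work.
        self.active = relevance >= self.threshold
        if self.active:
            self.runs += 1
        return self.active


def dispatch(subsystems, events):
    """Deliver each (target, relevance) event; return the names that woke up."""
    by_name = {s.name: s for s in subsystems}
    activated = []
    for target, relevance in events:
        if by_name[target].handle(relevance):
            activated.append(target)
    return activated
```

Events below the threshold cost only a comparison, which is the sense in which idle subsystems approach zero resource use in this toy model.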

3. Distributed Cognition Meshes

Current AI systems remain heavily centralized.

Future systems evolve toward:

distributed cognition fabrics.

Instead of routing all reasoning through hyperscale inference centers, cognition distributes across:

- edge systems

- local clusters

- autonomous nodes

- sovereign infrastructure

- distributed accelerators

- idle compute surfaces

This reduces:

- bandwidth pressure

- centralized bottlenecks

- energy concentration

- infrastructure fragility

The result is:

cognition locality optimization.

Reasoning happens closer to where intelligence is needed.
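Locality optimization can be sketched as a routing rule: send each request to the nearest node that still has capacity, and fall back to a central facility only when none does. The `Node` fields, the `route` function, and the `"central"` fallback name below are hypothetical, and latency is used as a simple proxy for distance.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    latency_ms: float  # hypothetical network distance from the requester
    free_slots: int    # remaining capacity on this node


def route(nodes, fallback="central"):
    """Pick the lowest-latency node with spare capacity, else fall back."""
    candidates = [n for n in nodes if n.free_slots > 0]
    if not candidates:
        return fallback
    chosen = min(candidates, key=lambda n: n.latency_ms)
    chosen.free_slots -= 1  # reserve one slot for this request
    return chosen.name
```

A real mesh would also have to weigh data placement, model availability, and trust boundaries; this sketch covers only the proximity decision.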

4. Entropy-Aware Execution

Traditional schedulers optimize:

- queues

- latency

- throughput

Future cognition systems optimize:

entropy reduction.

Execution engines increasingly prioritize:

- semantic value

- governance importance

- predictive relevance

- cognition density

- strategic significance

Low-value cognition becomes:

- compressed

- deferred

- summarized

- hibernated

- probabilistically reconstructed

This dramatically lowers infrastructure waste.
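The prioritization described above could be approximated with a value-weighted scheduler that runs high-scoring work first and defers anything below a cutoff. This is a sketch under stated assumptions: the signal names, the weights, and the cutoff are illustrative, and a weighted sum is only one possible scoring rule.

```python
import heapq


def schedule(tasks, weights, cutoff):
    """Split tasks into a run order (highest score first) and a deferred list.

    tasks:   list of (name, signals) pairs, where signals maps a signal
             name to a value in [0, 1]
    weights: maps each signal name to its weight (hypothetical values)
    cutoff:  tasks scoring below this value are deferred instead of run
    """
    heap, deferred = [], []
    for index, (name, signals) in enumerate(tasks):
        score = sum(weights[k] * signals.get(k, 0.0) for k in weights)
        if score < cutoff:
            deferred.append(name)
        else:
            # heapq is a min-heap, so negate the score; index breaks ties.
            heapq.heappush(heap, (-score, index, name))
    run_order = [heapq.heappop(heap)[2] for _ in range(len(heap))]
    return run_order, deferred
```

Deferred tasks are simply returned here; in the terms used above, a fuller system might summarize or hibernate them rather than drop them.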

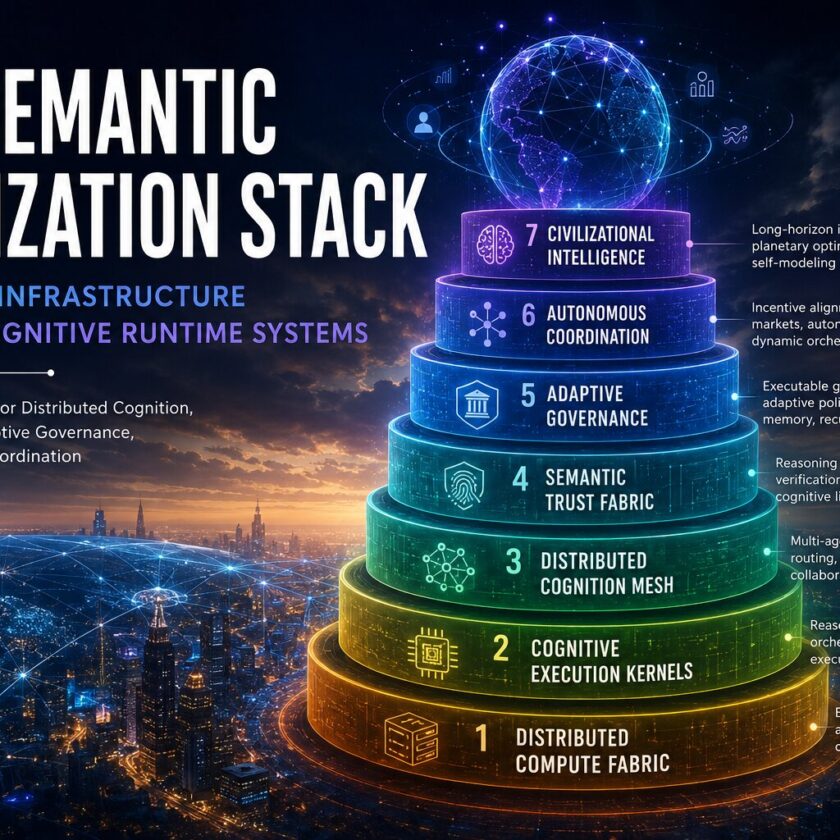

Why Centralized Hyperscale Infrastructure Eventually Breaks

The economics of current AI infrastructure are increasingly unstable.

Modern AI scaling depends on:

- larger GPU clusters

- larger cooling systems

- larger power grids

- larger centralized facilities

This creates structural vulnerabilities:

- energy concentration

- geopolitical dependence

- compute monopolization

- infrastructure fragility

- thermal scaling limits

Civilization-scale cognition cannot rely exclusively on centralized intelligence factories.

The future likely belongs to:

adaptive distributed cognition systems.

The Rise of Cognitive Infrastructure Economics

The next generation of infrastructure will increasingly behave like:

cognitive economies.

Instead of static resource allocation, runtime systems dynamically allocate:

- compute

- memory

- reasoning depth

- storage

- semantic persistence

- planning capacity

based on:

- cognition value

- strategic importance

- entropy reduction potential

- governance relevance

This creates:

cognition-aware economics.

Infrastructure becomes adaptive rather than static.
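As a toy model of value-based allocation, the sketch below divides a fixed resource budget across workloads in proportion to an assigned value score. The `allocate` function, the integer value scores, and the workload names in the test are assumptions for illustration; how such values would actually be measured is left open.

```python
def allocate(budget, values):
    """Split an integer budget across workloads in proportion to their value.

    budget: total units to hand out (for example, GPU-seconds)
    values: maps workload name to a non-negative integer value score
    """
    total = sum(values.values())
    if total <= 0:
        return {name: 0 for name in values}
    shares = {name: budget * v // total for name, v in values.items()}
    # Integer division can leave a few units unassigned; give them to the
    # highest-value workloads, one unit each.
    leftover = budget - sum(shares.values())
    for name in sorted(values, key=values.get, reverse=True)[:leftover]:
        shares[name] += 1
    return shares
```

Re-running the allocation whenever the value scores change is what would make the resulting assignment adaptive rather than static.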

Why This Could Push Marginal Infrastructure Cost Toward Near-Zero

The phrase “near-zero cost” does not imply:

- free hardware

- infinite energy

- infinite compute

It means:

near-zero marginal cognition cost.

In other words:

Each additional unit of intelligence requires progressively less incremental infrastructure.

This happens through:

- semantic compression

- distributed execution

- adaptive scheduling

- cognition prioritization

- predictive orchestration

- memory synthesis

- local inference

- entropy optimization

The architecture becomes:

asymptotically efficient.
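To make the claim concrete, here is one illustrative way to write it down. The cost function $C(n)$, the fixed cost $F$, and the constants $a$ and $c$ are assumptions chosen for the example, not quantities taken from any measured system.

```latex
% C(n): total infrastructure cost of supporting n units of cognition.
% Marginal cost of the next unit:
\mathrm{MC}(n) = C(n+1) - C(n)

% Linear scaling: every additional unit costs the same.
C_{\text{lin}}(n) = F + c\,n
\quad\Longrightarrow\quad
\mathrm{MC}_{\text{lin}}(n) = c

% Sublinear (here logarithmic) scaling: marginal cost shrinks with n.
C_{\log}(n) = F + a\,\ln(1+n)
\quad\Longrightarrow\quad
\mathrm{MC}_{\log}(n) = a\,\ln\!\left(1 + \frac{1}{n+1}\right)
\le \frac{a}{n+1} \xrightarrow[n \to \infty]{} 0
```

Under the logarithmic assumption, total cost still grows without bound, but the cost of each additional unit falls toward zero, which is the sense of “near-zero marginal cognition cost” used here.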

The Most Important Shift: From Hardware Scaling to Coordination Scaling

The next infrastructure revolution may not come primarily from:

- larger GPUs

- faster chips

- bigger datacenters

It may emerge from:

superior coordination architectures.

Human civilization currently wastes enormous cognition because:

- systems are disconnected

- memory is fragmented

- reasoning is isolated

- coordination is expensive

- governance is slow

- context is constantly lost

Cognitive runtime infrastructure attempts to unify:

- memory

- reasoning

- orchestration

- governance

- semantic continuity

into a continuously adaptive system.

This changes infrastructure economics fundamentally.

The Future Data Center

Future infrastructure may look radically different from today’s hyperscale model.

Instead of giant centralized facilities alone, cognition may emerge across:

- distributed edge fabrics

- semantic memory networks

- adaptive execution meshes

- autonomous coordination systems

- cognition marketplaces

- local inference clusters

The “data center” evolves into:

A planetary cognition substrate.

Final Thought

Cloud computing virtualized servers.

Artificial intelligence virtualized pattern recognition.

The next architectural leap may virtualize:

civilization-scale cognition itself.

And when cognition becomes:

- distributed

- semantic

- adaptive

- orchestrated

- entropy-aware

the economics of intelligence may change as dramatically as the economics of information changed during the rise of the internet.

The future of infrastructure may therefore belong not to the systems with the most hardware, but to the systems with the most efficient cognition.